I did not write this (I hardly write any LotusScript any more). It was written by Antony Cooper, who has kindly given permission to post it here because it is so useful: a very nice little “Run from Menu” agent/function that lets you run other agents directly on the server, which makes them much, much faster. We use it for running migration and administration updates that need to change a lot of existing data. I hope it’s as useful to you as it is to me.

```lotusscript
%REM
    Agent Select and Run agent on server
    Created by Antony Cooper
    Description: Simple agent to build a list of agents in a database
    and then run the selected agent on the server
%END REM
Option Public
Option Declare

Sub Initialize()
    Dim ws As New NotesUIWorkspace
    Dim session As New NotesSession
    Dim db As NotesDatabase
    Dim nid As String, nextid As String, agName As String, msg As String
    Dim i As Integer, flag As Integer
    Dim doc As NotesDocument
    Dim arrName As Variant

    Set db = session.CurrentDatabase

    REM Create note collection containing only the agents
    Dim nc As NotesNoteCollection
    Set nc = db.CreateNoteCollection(False)
    Call nc.SelectAllCodeElements(False)
    nc.SelectAgents = True
    Call nc.BuildCollection

    arrName = ""

    'Build array of agent names
    nid = nc.GetFirstNoteId
    For i = 1 To nc.Count
        'get the next note ID before moving on
        nextid = nc.GetNextNoteId(nid)
        Set doc = db.GetDocumentByID(nid)
        If IsArray(arrName) Then
            arrName = ArrayAppend(arrName, doc.GetFirstItem("$title").Values)
        Else
            arrName = doc.GetFirstItem("$title").Values
        End If
        nid = nextid
    Next

    'LBound/UBound fail on a non-array, so stop here if no agents were found
    If Not IsArray(arrName) Then
        MsgBox "No agents were found in this database.", 64, "User information"
        Exit Sub
    End If

    arrName = QuickSort(arrName, LBound(arrName), UBound(arrName))

    agName = ws.Prompt(PROMPT_OKCANCELCOMBO, "List of Database Agents", _
        "Select the agent to run on this database's server", "", arrName)

    If agName = "" Then
        MsgBox "No agent has been selected, action cancelled.", 64, "User information"
        Exit Sub
    End If

    If agName <> "" Then
        msg = "The agent you selected is '" & agName & "'." & Chr(10) & _
            "Do you want to run this agent on the server now?"
        '32 + 4 = question icon with Yes/No buttons; 6 = Yes
        flag = MsgBox(msg, 32 + 4, "User information")
        If flag <> 6 Then Exit Sub
    End If

    Dim agent As NotesAgent
    Set agent = db.GetAgent(agName)

    'RunOnServer returns 0 when the agent ran successfully
    If agent.RunOnServer = 0 Then
        MessageBox "Agent ran", 32, "Success"
    Else
        MessageBox "Agent did not run", 64, "Failure"
    End If
End Sub

' Function Quick sort.
' Sorts array
'=============================================================
Public Function QuickSort(anArray As Variant, indexLo As Long, indexHi As Long) As Variant
    Dim lo As Long
    Dim hi As Long
    Dim midValue As String
    Dim tmpValue As String

    lo = indexLo
    hi = indexHi

    If (indexHi > indexLo) Then
        'get the middle element (integer division keeps the index whole)
        midValue = anArray((indexLo + indexHi) \ 2)

        While (lo <= hi)
            'find first element greater than or equal to middle
            While (lo < indexHi) And (anArray(lo) < midValue)
                lo = lo + 1
            Wend

            'find first element smaller than or equal to middle
            While (hi > indexLo) And (anArray(hi) > midValue)
                hi = hi - 1
            Wend

            'if the indexes have not crossed, swap
            If (lo <= hi) Then
                tmpValue = anArray(lo)
                anArray(lo) = anArray(hi)
                anArray(hi) = tmpValue
                lo = lo + 1
                hi = hi - 1
            End If
        Wend

        'If the right index has not reached the left side of array, sort it again
        If (indexLo < hi) Then
            Call QuickSort(anArray, indexLo, hi)
        End If

        'If the left index has not reached the right side of array, sort it again
        If (lo < indexHi) Then
            Call QuickSort(anArray, lo, indexHi)
        End If
    End If

    QuickSort = anArray
End Function
```

Old Comments

————

##### Hora_ce(10/06/2011 10:22:24 GDT)

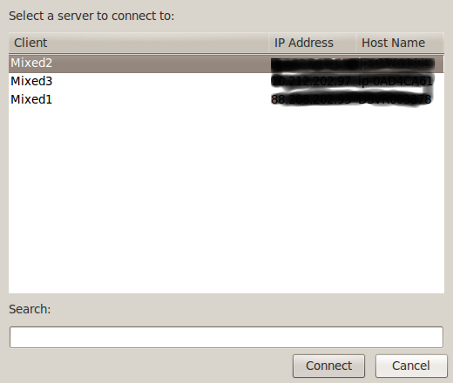

I’ve created something similar to this one… but in addition, the user can choose where to run the agent: locally or on the server.

##### Mark Myers(09/06/2011 21:04:27 GDT)

You are correct, the timeout is not applied with this method. That is one of the very reasons it was created: we have a very strict admin who won’t let agents run for more than 10 minutes, which is often too short for a migration agent.

##### Sean Cull(09/06/2011 21:01:48 GDT)

Just watch out: I am not sure that the server timeouts that protect against infinite loops etc. are enforced via this method. I once had one go for “some time” with no apparent way to stop it.

##### Mark Myers(10/06/2011 22:10:29 GDT)

Cool. I take it the run-local option is for scheduled agents rather than selecting them manually from the action menu? Where is it posted?