Here at LDC towers we are quite a diverse bunch: each member takes a primary role in a few technologies and acts as secondary or tertiary backup for other members when needed. With people like Matt White and Ben Poole in the team handling the bulk of the XPage work, my primary UI skills were Spring web/view/MVC and Flex (as well as the classic Domino we all still know like the back of our hands).

During this time I kept up with XPages by watching Matt’s videos on XPages101.net and following the rest of the blogosphere’s posts, but quite frankly demand has outstripped supply and now I’m XPaging in anger, so I figured a review of XPages was due for anybody else who is late to the show (I know you lot, some of you are still not doing proper Java in your apps!!!).

Sooo…what do I think?

Well, frankly I like it: you produce really nice apps chock-full of functionality, cleanly and at breakneck speed. The only real hurdle is how you approach the development cycle, and this depends on your point of view:

From a classic Domino person’s point of view, XPages are not another design element like Pages or Navigators (remember them?); they don’t work that way. Think of XPages as a separate product installed in your Domino Designer and server that gives you a whole new layer of features. I found it easiest to think of them as an IBM plug-in to the classic Lotus product, which helped me make sense of some of the integration mismatches and different ways of doing things.

From a non-Domino person’s point of view: IBM have written another implementation of JSF (just like Spring Faces) and glued it to a legacy NoSQL database with integrated security and a dedicated server platform, so that the platform can serve up content in an up-to-date form (just as they did with the HTTP task in 4.6).

Once you have your point of view and get down to writing code, you will find you don’t actually write XPages; they are just the final container. What you actually write are custom controls, which you then plug into the relevant XPage when you’re ready, like little UI and code modules sitting in a hierarchy (a bit like Spring MVC). Understanding this is the cornerstone of not tripping yourself up.

As for Designer itself, it’s obvious that the framework architects have been given considerably more time to get their sh*t together than the IDE designers. There is a lot of “you need to do that in the Source view” or “change that in the ‘All Properties’ section”. If you can’t figure out how to do something, don’t worry: they WILL have catered for it, it just won’t be in the Designer UI yet.

Lists are easier to read than chunks of text, so here are the goods and bads of XPages as I see them.

Goods

- SSJS (server-side JavaScript): I love this, all the power of JavaScript running nicely and securely on the back end, with nearly all the functionality of @Formulas added to it (makes me want to go off and learn node.js).

- Flexible: unlike other modern JSF/JSP frameworks, XPages thankfully inherits its ‘classic’ ancestor’s ability to adapt completely to what you want, which is a breath of fresh air after Spring, where you are basically told “we know what’s best, so you can’t do that”.

- Security: it’s still there, it’s still far easier than in other frameworks to do security well, and it inherits well from classic (though not perfectly).

- Expandability and plug-ins: IBM have gone with a constant upgrade path in which they develop add-ons, release them to the community as plug-ins, and eventually roll them into the main product (the best example being the “XPages Extension Library”). This gives you a nice balance of speed and supportability (thumbs up).

Bads

- SSJS: it’s not ECMA-compliant, and I’ve already hit a few WTF moments when coding functions. Debugging is not the friendliest either, and please, for the love of all that’s holy, can I have an auto-format keyboard shortcut?

- Still a fair amount of hacking: there is quite a lot of “how the hell do I do that?” followed by some strange, convoluted action to deal with a simple problem. Old Domino people are used to it, but I can imagine it being a real head-scratcher for newcomers.

- It has that IBM “I’m still slightly a prototype” feel about it. With a lot of IBM products you get the feeling they went “cool, it works, ship it” while gently bypassing testing. Now, I know they are really trying (I got a reply to a tweet in which I was bitching about an error within about 30 mins), but it’s still an ongoing process.

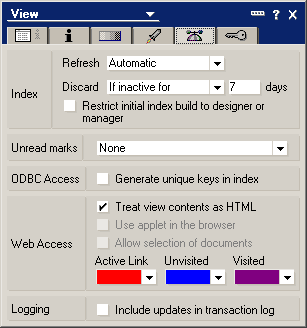

- The IDE is slow and hates sharing with other developers at the same time (turn off automatic build). I use plain Eclipse most of the time, as well as Adobe Flash Builder and, for my sins, IBM RAD, and all of them are faster than Designer 8.5.3.

All in all, what with the XWork server, if you can get a client to ignore the “Lotus” memory, then we have a real contender on our hands. I am building apps at the same speed as (if not faster than) I used to with classic Domino, but they are easy to make look really good, are technologically up to date, and are built with a great deal less hassle than with something like Spring. IBM just need to clean up the rough edges and convince us they are not going to dump the whole thing for pure Connections.

Old Comments

Mark Barton(09/07/2012 09:35:35 GDT)

Thanks Mark, some good stuff there and some valid points.

Let’s hope IBM listen to the grumblings about DDE; I can’t imagine new developers would adopt it out of choice.

It would be interesting to see some best-practice design patterns for where the business logic of a component should sit. Java over SSJS?

We both know it makes no sense to put all your eggs in one basket with regard to technology, and any client-side developer worth their salt will be keeping up with the trends.

Jason Hook(09/07/2012 10:11:57 GDT)

My first project was a steep learning curve, even with Java and JSP experience.

Now in later projects I’m leveraging an awesome amount of business logic I wrote in managed beans in that first project. Because of this my speed of development is rapidly increasing.

I completely agree with your sentiments on DDE. We deserve a much better IDE. There are plenty of great IDEs: Visual Studio, Coda, Flux4. I feel that once you’re up and running with XPages, a decent text editor with solid code completion might be better than DDE.

Managed beans are great! It means you are writing Java, but frankly, at the level most of us need, that’s not so hard. Must write down a tip or two on using them.
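As a rough illustration (not taken from Jason’s projects), a managed bean is just a plain Java class: a public no-argument constructor, getter/setter pairs for the properties a page binds to, and `Serializable`, since XPages may need to serialize scoped state (view-scoped beans in particular). The class name, its fields and the page-size rule below are all hypothetical. In a JSF application such as an XPages app, a bean like this would be registered in `faces-config.xml` with a `<managed-bean>` entry giving its name, class and scope, after which a page could bind to it with EL such as `#{userPrefs.pageSize}` (the bean name `userPrefs` is likewise an assumption).

```java
import java.io.Serializable;

/**
 * Hypothetical session-scoped managed bean; names and rules are illustrative only.
 * Plain Java: public no-arg constructor, getter/setter pairs, Serializable.
 */
public class UserPreferencesBean implements Serializable {
    private static final long serialVersionUID = 1L;

    // Illustrative bounds for how many rows a view control should show.
    private static final int MIN_PAGE_SIZE = 5;
    private static final int MAX_PAGE_SIZE = 100;

    private int pageSize = 25;
    private String theme = "default";

    // JSF creates managed beans through this no-argument constructor.
    public UserPreferencesBean() {
    }

    public int getPageSize() {
        return pageSize;
    }

    // The business rule lives in the bean instead of being repeated in each page's SSJS:
    // out-of-range values are clamped into [MIN_PAGE_SIZE, MAX_PAGE_SIZE].
    public void setPageSize(int pageSize) {
        this.pageSize = Math.max(MIN_PAGE_SIZE, Math.min(MAX_PAGE_SIZE, pageSize));
    }

    public String getTheme() {
        return theme;
    }

    // Null or blank input falls back to "default"; anything else is stored trimmed.
    public void setTheme(String theme) {
        this.theme = (theme == null || theme.trim().isEmpty()) ? "default" : theme.trim();
    }
}
```

Keeping the clamping rule in the bean means every page bound to `pageSize` gets the same behaviour, which is the kind of cross-project reuse of business logic described above.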

Scoped variables & being able to maintain state!

Geeks that build software for geeks, that’s IBM for me. Things always have that complicated & not quite finished feel, and then here comes the next shiny thing.

I know that Connections is the current big shiny thing, but some of us work for small to medium-sized enterprises that can’t justify that kind of spend. So, to maintain and grow market share in that space, I expect that they (IBM):

Will continue to support & clearly articulate the future direction of XPages and XWork;

Will focus on marketing the product so that it looks new and shiny for the next generation of IT Managers that will be buying the product;

Will reach out to developers and administrators to help them get the most out of the innovations they are making, rather than developing stuff and waiting for us to make sense of it. LUGs seem like the perfect opportunity to do that.

I deliberately made those items sound more positive because they need the encouragement. I’ve invested huge amounts of time acquiring skills and I want IBM to match that commitment we are all making.

Must get back to work (writing the next application with XPages)!

Mark(09/07/2012 10:28:56 GDT)

Bleeding heck, Mr Hook, that comment is longer than the post! Thanks for putting the effort in; lots to check on after reading it.

Mark: yes, some standards would be a good idea.

Keith Strickland(09/07/2012 15:23:47 GDT)

“XPages are not another component like pages or Navigators (remember them), they don’t work that way, think of XPages as a separate product that you have installed in your domino designer and server that provides you with a whole new layer of features”

That statement is key to clearing your mind of all the old Lotus Domino hacks we used to have to incorporate just to get a simple feature working on the web. I’ve been saying this for a while now: XPages are not another design element, they are a new platform altogether. The sooner someone accepts this, the sooner they will start becoming productive with XPage development.

Mark(10/07/2012 05:49:11 GDT)

@keith yup yup

Mark(10/07/2012 12:37:53 GDT)

@michael one of the things I gauge DDE’s speed against is how long it takes before I can code, rather than merely open the app, and that’s where DDE seems to suffer. YES, my apps are nearly always on a server, but if it’s a non-XPage fix, I can normally open R7.0.4, do a minor fix and shut down before 8.5.3 has got its knickers sorted out.

Michael Bourak(10/07/2012 10:48:06 GDT)

Agree with much… but DDE being slow is a mystery to me. On my laptop (5 years old, 4 GB RAM though) it’s fast… at least MUCH MUCH faster than RAD, for example.

Are you sure you disabled automatic compilation? Are you running against a server over a low-bandwidth connection?

On the same subject, I often see people complain about DDE stability. On my laptop, it has crashed maybe… 5 times in 6 months of daily use…

Michael Bourak(10/07/2012 13:12:21 GDT)

Most of the “wait time” is due to the Java tools initializing (Eclipse stuff) and the time taken to get the project from the network…

In 8.5.4 there is a new feature coming that will help keep DDE open rather than relaunching/closing it… (not sure I can say more… but I use it in the beta version and like it a lot)

Ben Poole(10/07/2012 20:40:08 GDT)

The Java tooling in Eclipse does take time, but DDE still has its own pain points. I’ve found that there are a few things that make it faster:

1. Turn off automatic builds

2. Increase default JVM heap / max heap sizes

3. Ensure the host machine / VM has plenty of RAM

It’s still extremely slow compared with proper IDEs, though.

Michael Bourak(11/07/2012 09:09:33 GDT)

@Ben: running DDE or any disk-intensive software inside a VM will suffer a lot from poor virtualisation disk performance…

Can you give an example of a “proper IDE”? If you mean Notepad++, sure.

Which OS are you running DDE on? What’s your config?

Mark(11/07/2012 08:41:18 GDT)

@michael looking forward to 8.5.4!

@Ben yup, I took your advice on those bits a while back and it has helped a lot

Ben Poole(25/07/2012 22:33:01 GDT)

“running DDE or any disk intensive software inside a VM will suffer a lot from poor virtualisation disk performances”

Pish!

Re OS, you can run DDE on Win7 (64-bit), Win7 (32-bit) or WinXP. It doesn’t matter; it will still be slow.

As for “proper” IDEs, there are a few out there:

– Visual Studio

– Eclipse (vanilla)

– NetBeans

but yes, generally my preference is for the simpler tools like Coda 2 and Sublime Text 2.