Workshops, or the simple act of sitting down with the client or customer in a single room and thrashing out a problem, are absolutely invaluable in any decent-sized project.

They are a necessity, both for getting a feel for a given bit of work and figuring out local issues[1], and for building rapport with the business so they know you’re all on the same side when trying to deliver.

The only problem is timing. The human element of a workshop, the rapport side of it, demands that we run one straight out of the gate to make a good first impression: “We are your kind of people.” “Tell us what you need, and how we can help.” All of that says you should hold a workshop first thing in a project.

But from a technical and practical point of view this does not work, because you haven’t yet turned over all the rocks and looked under them. Everyone is fresh to the project and enthusiastic, so very little of the true cost, or of the issues the project is going to face, will come up at this stage. And frankly, from a morale and engagement point of view, you don’t want the cynical techs raising such points in the initial calls.

This is one of the reasons why, when large consulting houses come in and do an evaluation of an infrastructure or what have you, they always miss things. It’s not their fault; those things simply have not been found yet.

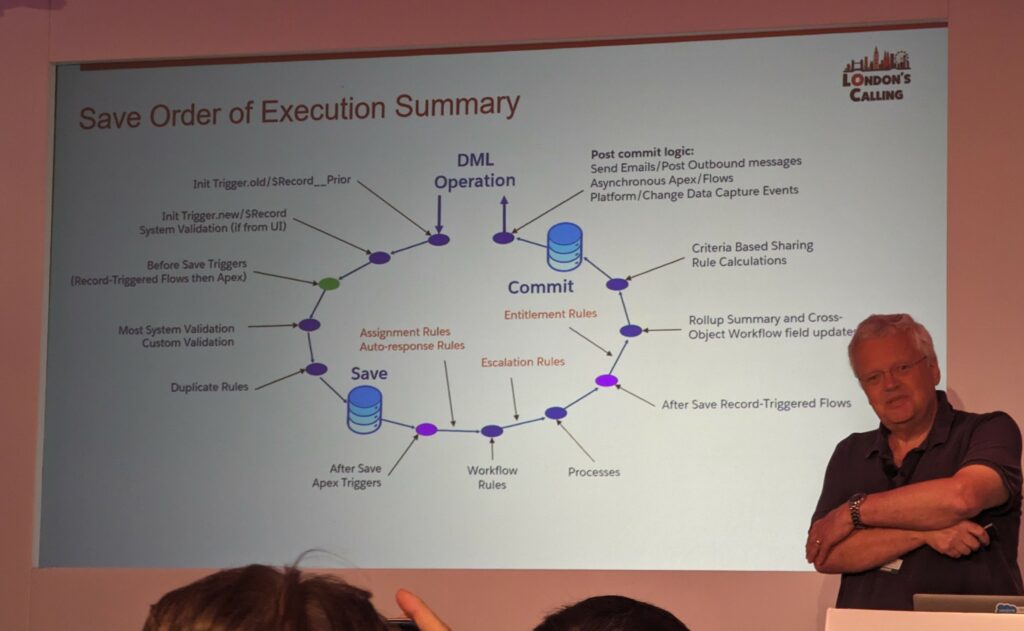

You need to go down into the project’s dependent systems, turn over every rock, find the real people who are doing things rather than the people who were introduced as being in charge, and find all the nooks and crannies where the dragons be, before you have a true picture of any existing system or process.

So, for large-scale projects, I would recommend two sets of workshops. The first is an initial workshop that is human-focused: it develops rapport, establishes starting positions, and works out where people hope to end up.

Then, further down the project timeline, book in a review workshop, or “step back” workshop, to be held once you’ve had a few months in. By then the techs have had a chance to talk to each other, you’ve had a chance to thrash out the real processes, and you’ve found those hidden systems that everyone had forgotten about, along with the people who do all the little manual interventions that make a process run in the real world.

After that, put those findings into the project plan and cater for any knock-backs they will cause. This is the crucial point: you will have planned for these knock-backs and timing changes up front. That shows the client that you have been down this road before, that you know this is what’s going to happen, and that you can predict it and roll with it to still deliver on time. And should the review workshop not find anything new, it can be used as a back-patting exercise for the client, showing everyone how well they knew their stuff to start with and what a pleasure they are to work with.

So yes, I would always include two sets of workshops.

1. If it’s a large international corporation.